Memory Leaks Demystified

Tracking down memory leaks in Node.js is a recurring topic, and people are always interested in learning more about it because of the complexity and wide range of causes.

Not all memory leaks are immediately obvious; quite the opposite. However, once we identify a pattern, we must look for a correlation between memory usage, the objects held in memory, and response time. When examining objects, look at how many of them are collected and whether any of them are unusual, depending on the framework or technique used to serve the content (e.g. Server Side Rendering). Hopefully, after you finish this article, you'll understand how memory is managed in a Node.js application and be able to choose a strategy for debugging its memory consumption.

Garbage Collection Theory in Node.js

JavaScript is a garbage-collected language. Google’s V8, the JavaScript engine Node.js is built on, was initially created for Google Chrome but can also be used as a standalone runtime in many instances. Two important operations of the Garbage Collector in Node.js are:

- identify live or dead objects and

- recycle/reuse the memory occupied by dead objects.

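Something important to keep in mind: when the Garbage Collector runs, it can pause your application until it finishes its work (V8 has moved much of this work off the main thread, as described in the Orinoco section below, but pauses still occur). As such, you will want to minimize its work by taking care of your objects’ references.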

Memory for JavaScript objects in a Node.js process is automatically allocated and de-allocated by the V8 JavaScript engine. Let’s see how this looks in practice.

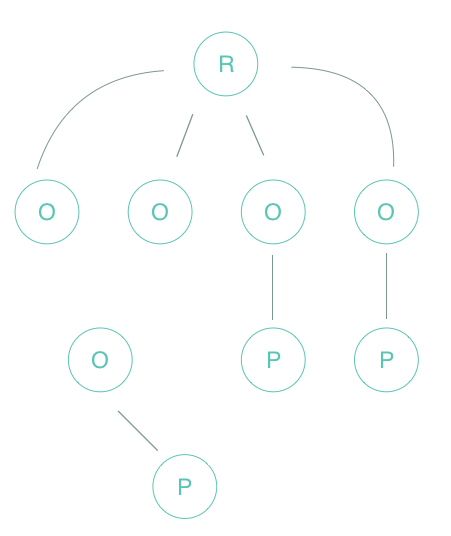

If you think of memory as a graph, imagine V8 keeping a graph of all variables in the program, starting from the ‘Root node’. This could be the window object in a browser or the global object in a Node.js module, and it is usually known as the dominator. Something important to keep in mind is that you don’t control how this Root node is de-allocated.

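Next, you’ll find Object nodes, which can hold references to other nodes, and primitive values such as strings and numbers, which are the leaves of the graph (they have no child references). JavaScript’s primitive types are Boolean, Null, Undefined, Number, BigInt, String, and Symbol; everything else is an Object.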

V8 walks through the graph and tries to identify groups of data that can no longer be reached from the Root node. If data is not reachable from the Root node, V8 assumes it is no longer used and releases the memory. Remember: to determine whether an object is live, the GC checks whether it is reachable through some chain of pointers from an object that is live by definition (a root). Everything else, meaning any object that cannot be reached from a root or from another live object, is considered garbage.
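A small illustration of reachability, using an ordinary object named root as a hypothetical stand-in for a real GC root such as Node.js’s global object: dropping one reference is not enough if another chain of pointers still leads to the object.

```javascript
// Hypothetical stand-in for a GC root (in Node.js, globalThis plays this role).
const root = { cache: [] };

let session = { id: 1, payload: 'x'.repeat(1024) };
root.cache.push(session); // root -> cache -> session

// Dropping the local variable does not make the object garbage...
session = null;

// ...because it is still reachable through the chain root.cache[0]
console.log(root.cache[0].id); // prints 1

// Only after the last path from the root is cut does the object become collectable.
root.cache.length = 0;
```

The GC never looks at whether you still "need" an object, only at whether some path from a root reaches it, which is exactly why forgotten references in long-lived structures leak.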

In a nutshell, the garbage collector has two main tasks:

- trace and

- count references between objects.

It can get tricky when you need to track remote references from another process, but in Node.js applications, we use a single process which makes our life a bit easier.

V8’s Memory Scheme

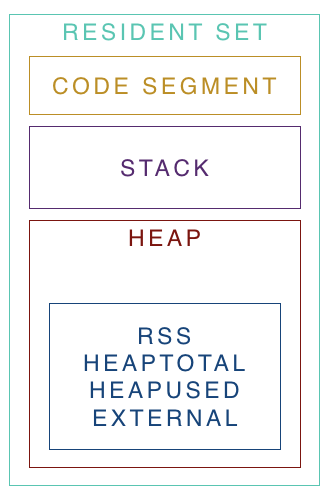

V8 uses a scheme similar to the Java Virtual Machine and divides memory into segments. Everything lives inside what is known as the Resident Set: the portion of memory occupied by a process that is held in RAM.

Inside the Resident Set you will find:

- Code Segment: Where the code being executed is stored.

- Stack: Contains local variables and value types (primitives), along with pointers referencing objects on the heap; its frames also define the control flow of the application.

- Heap: A memory segment dedicated to storing reference types like objects, strings and closures.

Two more important things to keep in mind:

- Shallow size of an object: the size of memory that is held by the object itself

- Retained size of an object: the size of the memory that is freed once the object is deleted, along with the objects that were reachable only through it
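To make the difference between shallow and retained size concrete, here is a toy model: a hypothetical heap expressed as a plain JavaScript object (not how V8 actually stores objects). It computes an object’s retained size by checking what becomes unreachable from the root once that object is removed.

```javascript
// Hypothetical toy heap: each object has a shallow size (bytes) and outgoing references.
const heap = {
  root: { size: 0, refs: ['a', 'b'] },
  a: { size: 100, refs: ['c', 'd'] },
  b: { size: 50, refs: ['d'] },
  c: { size: 200, refs: [] },
  d: { size: 300, refs: [] },
};

// Collect every object reachable from `start`, pretending `excluded` was deleted.
function reachable(start, excluded) {
  const seen = new Set();
  const stack = [start];
  while (stack.length > 0) {
    const id = stack.pop();
    if (id === excluded || seen.has(id)) continue;
    seen.add(id);
    stack.push(...heap[id].refs);
  }
  return seen;
}

// Retained size: shallow sizes of everything that becomes unreachable once `id` is gone.
function retainedSize(id) {
  const before = reachable('root');
  const after = reachable('root', id);
  let total = 0;
  for (const obj of before) {
    if (!after.has(obj)) total += heap[obj].size;
  }
  return total;
}

console.log(heap.a.size);       // shallow size of a: 100
console.log(retainedSize('a')); // 300: a (100) + c (200); d stays alive through b
```

Note that d is not part of a’s retained size even though a references it, because b still keeps d reachable. This is why an object with a small shallow size can still have a very large retained size, and vice versa.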

Node.js exposes the memory usage of the process, measured in bytes, through process.memoryUsage(). The object it returns contains:

- rss: the resident set size.

- heapTotal and heapUsed: V8’s memory usage, i.e. the total size of the heap and the part of it currently in use.

- external: the memory usage of C++ objects bound to JavaScript objects managed by V8.
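A minimal sketch that reads these fields and prints them in megabytes; formatMemoryUsage is a hypothetical helper name. Newer Node.js versions also report an arrayBuffers field, which this sketch ignores.

```javascript
// Convert each field returned by process.memoryUsage() from bytes to megabytes.
function formatMemoryUsage() {
  const usage = process.memoryUsage(); // values are reported in bytes
  const toMB = (bytes) => `${(bytes / 1024 / 1024).toFixed(2)} MB`;
  return {
    rss: toMB(usage.rss),
    heapTotal: toMB(usage.heapTotal),
    heapUsed: toMB(usage.heapUsed),
    external: toMB(usage.external),
  };
}

console.log(formatMemoryUsage());
```

Logging this periodically while you load-test the service gives a cheap first signal: a heapUsed value that keeps climbing and never comes back down after garbage collection is worth investigating with heap snapshots.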

Finding the leak

Chrome DevTools is a great tool that can be used to diagnose memory leaks in Node.js applications via remote debugging. Other tools exist and will give you similar results. This blog post relies on one of those tools in order to give you a clear understanding of what is happening. However, keep in mind that profiling is a CPU-intensive task, which can impact your application negatively. Be aware!

The Node.js application we are going to profile is a simple HTTP API Server that has multiple endpoints, returning different information to whoever is consuming the service. You can clone the repository of the Node.js application used here.

```javascript
const http = require('http')

// Module-level array: anything pushed here stays reachable for the life of the process
const leak = []

function requestListener(req, res) {
  if (req.url === '/now') {
    let resp = JSON.stringify({ now: new Date() })
    // Intentional leak: one parsed object per request is stored and never removed
    leak.push(JSON.parse(resp))
    res.writeHead(200, { 'Content-Type': 'application/json' })
    res.write(resp)
    res.end()
  } else if (req.url === '/getSushi') {
    // Busy-waits for 5 seconds, blocking the event loop (CPU-bound work)
    function importantMath() {
      let endTime = Date.now() + (5 * 1000);
      while (Date.now() < endTime) {
        Math.random();
      }
    }

    function theSushiTable() {
      return new Promise(resolve => {
        resolve('🍣');
      });
    }

    async function getSushi() {
      let sushi = await theSushiTable();
      res.writeHead(200, { 'Content-Type': 'text/html; charset=utf-8' })
      res.write(`Enjoy! ${sushi}`);
      res.end()
    }

    getSushi()
    // Runs before getSushi() resumes after its await, so the response is delayed ~5s
    importantMath()
  } else {
    res.end('Invalid request')
  }
}

const server = http.createServer(requestListener)
server.listen(process.env.PORT || 3000)
```

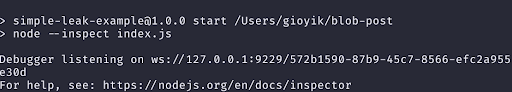

Start the Node.js application:

We have been using a 3S (3 Snapshot) approach to diagnose and identify possible memory issues. Interestingly enough, we found that this approach has long been used by Loreena Lee on the Gmail team to solve memory issues. A walkthrough of this approach:

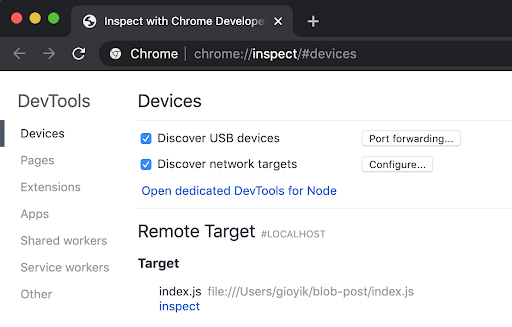

- Open Chrome DevTools and visit chrome://inspect.

- Click on the inspect button for one of your applications in the Remote Target section located at the bottom.

Note: Make sure the Node.js application you want to profile was started with the inspector enabled (for example, with the --inspect flag) and that the Inspector is attached to it. You can also connect to Chrome DevTools using ndb.

You are going to see a Debugger Connected message in the output of your console when the app is running.

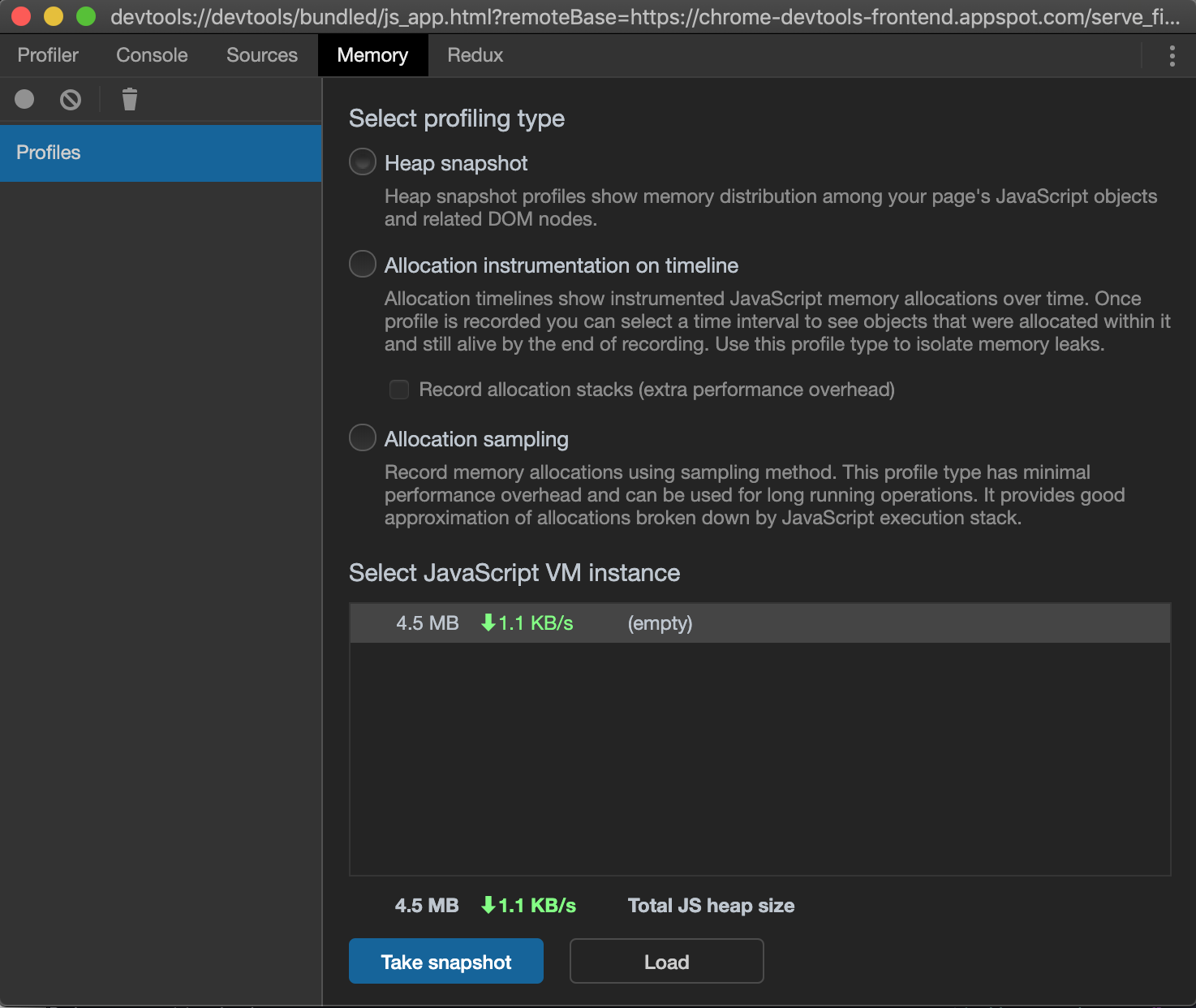

- Go to Chrome DevTools > Memory

- Take a heap snapshot

In this case, we took the first snapshot without any load or processing being done by the service. This is a tip for certain use cases: it’s fine if we are completely sure the application doesn’t require any warm-up before accepting requests or doing some processing. Sometimes it makes sense to perform a warm-up action before taking the first heap snapshot, as there are cases where you might be doing lazy initialization of global variables on the first invocation.

- Perform the action in your app that you think is causing leaks in memory.

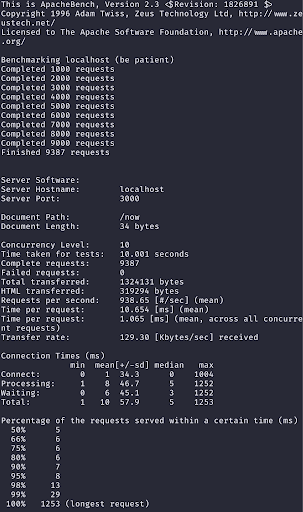

In this case we are going to run npm run load-mem, which starts ab (ApacheBench) to simulate traffic/load on your Node.js application.

- Take a heap snapshot

- Again, perform the action in your app that you think is causing leaks in memory.

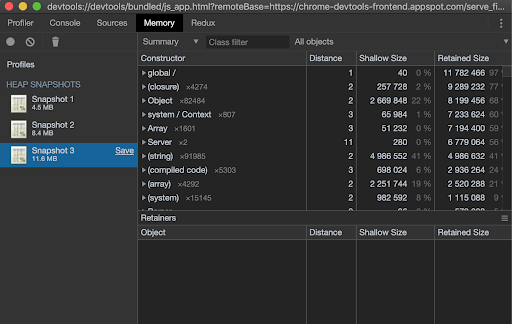

- Take a final heap snapshot

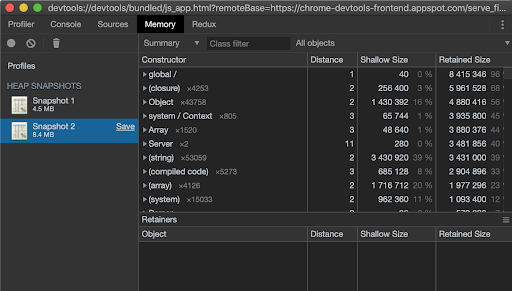

- Select the latest snapshot taken.

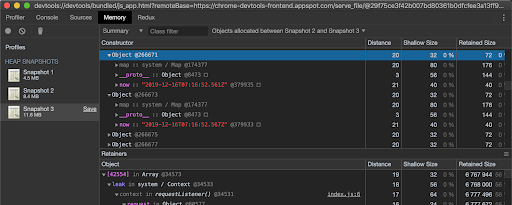

- At the top of the window, find the drop-down that says “All objects” and switch this to “Objects allocated between snapshots 1 and 2”. (You can also do the same for 2 and 3 if needed). This will substantially cut down on the number of objects that you see.

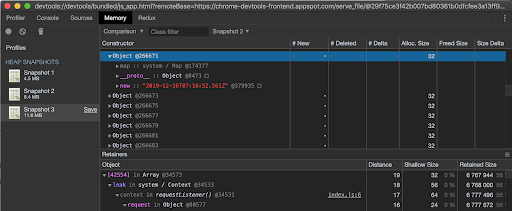

The Comparison view can help you identify those objects too:

In this view you’ll see a list of leaked objects that are still hanging around: top-level entries (a row per constructor) and columns for the distance of the object to the GC root, the number of object instances, shallow size, and retained size. You can select one to see what is being retained in its retaining tree. A good rule of thumb is to first ignore the items wrapped in parentheses, as those are built-in structures. The number after the @ character is the object’s unique ID, allowing you to compare heap snapshots on a per-object basis.
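If you prefer to capture the snapshots for this comparison from inside the process, Node.js 11.13.0 and later provides v8.writeHeapSnapshot(), which writes a .heapsnapshot file you can open with the Load button in the DevTools Memory tab. A minimal sketch, assuming snapshots go to the OS temp directory; takeSnapshot is a hypothetical helper.

```javascript
const v8 = require('v8');
const path = require('path');
const os = require('os');

// Write a heap snapshot to the temp directory and return the file name.
// v8.writeHeapSnapshot() is synchronous: the event loop is blocked while the heap is serialized.
function takeSnapshot(label) {
  const file = path.join(os.tmpdir(), `${label}-${Date.now()}.heapsnapshot`);
  return v8.writeHeapSnapshot(file);
}

const first = takeSnapshot('snapshot-1');
console.log(`Wrote ${first}`);
```

Calling takeSnapshot three times, with your load test running in between, reproduces the 3S workflow; because serialization blocks the process, avoid doing this on a production instance that is serving traffic.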

A typical memory leak might retain a reference to an object that’s expected to only last during one request cycle by accidentally storing a reference to it in a global object that cannot be garbage collected.

This example generates an object containing the timestamp of when the request was made, to imitate an application object that might be returned from an API query, and purposefully leaks it by storing it in a global array. Looking at a couple of the retained Objects, you can see some examples of the data that has been leaked, which you can use to track down the leak in your application.
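In this example, the simplest fix is to stop pushing into the global array at all. If an application genuinely needs some history, one option is to bound it so that old entries become unreachable again. A sketch, where MAX_ENTRIES and remember() are hypothetical names:

```javascript
// Hypothetical limit: keep only the most recent entries instead of every one ever seen.
const MAX_ENTRIES = 100;
const recent = [];

function remember(entry) {
  recent.push(entry);
  if (recent.length > MAX_ENTRIES) {
    recent.shift(); // drop the oldest entry so it becomes unreachable and collectable
  }
}

// Simulate 1,000 requests to /now
for (let i = 0; i < 1000; i++) {
  remember(JSON.parse(JSON.stringify({ now: new Date() })));
}

console.log(recent.length); // 100
```

Memory held by the array now stays flat regardless of traffic, so repeated heap snapshots should no longer show the number of retained Objects growing between runs.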

NSolid is great for this type of use case, because it gives you really good insight into how memory increases with every task or load test you perform. You can also see in real time how every profiling action impacts CPU, if you are curious.

In real-world situations, memory leaks tend to happen when you are not looking at the tool you use to monitor your application. Something great about NSolid is the ability to set thresholds and limits for different metrics of your application. For example, you can set NSolid to take a heap snapshot if more than X amount of memory is being used, or if memory hasn’t recovered from a high consumption spike within X time. Sounds great, right?
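As a rough illustration of the idea only (this is not NSolid’s API), a process can poll its own heap usage and write a single snapshot once a threshold is crossed. HEAP_THRESHOLD_BYTES, the 30-second interval, and checkHeap() are hypothetical choices.

```javascript
const v8 = require('v8');
const path = require('path');
const os = require('os');

// Hypothetical threshold: snapshot once heapUsed exceeds this many bytes.
const HEAP_THRESHOLD_BYTES = 512 * 1024 * 1024;

let snapshotTaken = false;

// Returns the snapshot path if one was written, otherwise null.
function checkHeap(thresholdBytes = HEAP_THRESHOLD_BYTES) {
  const { heapUsed } = process.memoryUsage();
  if (snapshotTaken || heapUsed < thresholdBytes) return null;
  snapshotTaken = true; // take at most one snapshot: writing one is expensive
  const file = path.join(os.tmpdir(), `heap-${process.pid}-${Date.now()}.heapsnapshot`);
  return v8.writeHeapSnapshot(file);
}

// Check every 30 seconds; unref() lets the process exit if this timer is all that is left.
setInterval(checkHeap, 30 * 1000).unref();
```

Limiting the sketch to one snapshot follows the same caution as the takeaways below: each snapshot blocks the process and can be large, so you want to decide up front how many are acceptable.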

Marking and Sweeping

V8’s garbage collector is mainly based on the Mark-Sweep collection algorithm, a tracing garbage collection technique that operates by marking reachable objects, then sweeping over memory and recycling objects that are unmarked (which must be unreachable), putting them on a free list. V8’s collector is also generational: objects may move within the young generation, from the young to the old generation, and within the old generation.

Moving objects is expensive since the underlying memory of objects needs to be copied to new locations and the pointers to those objects are also subject to updating.

For mere mortals, this could be translated to:

V8 recursively looks for every object’s reference path to the Root node. For example, in a browser the "window" object is a global variable that can act as a Root. The window object is always present, so the garbage collector can consider it and all of its children to be always present (i.e. not garbage). If an object has no path to the Root node, it is marked as garbage and swept later so that its memory can be reused.
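The mark and sweep phases can be sketched with a toy heap in which objects are plain entries in a Map. This is a teaching model under obvious simplifying assumptions (named objects instead of raw memory, freed ids instead of freed memory blocks), not how V8 is implemented.

```javascript
// Toy model of mark-and-sweep; ToyHeap and its object ids are hypothetical.
class ToyHeap {
  constructor() {
    this.objects = new Map(); // id -> { refs: [ids], marked: boolean }
    this.roots = new Set();
    this.freeList = [];
  }

  allocate(id, refs = []) {
    this.objects.set(id, { refs, marked: false });
  }

  // Mark phase: depth-first walk from every root, marking each object it reaches.
  mark() {
    const stack = [...this.roots];
    while (stack.length > 0) {
      const obj = this.objects.get(stack.pop());
      if (!obj || obj.marked) continue;
      obj.marked = true;
      stack.push(...obj.refs);
    }
  }

  // Sweep phase: unmarked objects are unreachable, so reclaim them onto the free list;
  // survivors have their mark cleared for the next cycle.
  sweep() {
    for (const [id, obj] of this.objects) {
      if (obj.marked) {
        obj.marked = false;
      } else {
        this.objects.delete(id);
        this.freeList.push(id);
      }
    }
  }

  collect() {
    this.mark();
    this.sweep();
  }
}

const heap = new ToyHeap();
heap.allocate('global', ['config']);
heap.allocate('config');
heap.allocate('requestA', ['tmp']); // nothing points to requestA
heap.allocate('tmp');
heap.roots.add('global');
heap.collect();

console.log([...heap.objects.keys()]); // [ 'global', 'config' ]
console.log(heap.freeList);            // [ 'requestA', 'tmp' ]
```

Note that tmp is collected even though requestA references it: what matters is whether a path from a root exists, not whether anything points at the object.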

Modern garbage collectors improve on this algorithm in different ways, but the essence is the same: reachable pieces of memory are marked as such and the rest is considered garbage.

Remember, nothing that can be reached from a Root is considered garbage. Unwanted references are variables kept somewhere in the code that will not be used anymore and point to a piece of memory that could otherwise be freed. So, to understand the most common leaks in JavaScript, we need to know the ways references are commonly forgotten.
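One common way references get forgotten is an event listener registered on a long-lived emitter: the listener’s closure keeps everything it captures reachable. A minimal sketch using Node’s built-in events module; handleRequest and the 'tick' event are hypothetical.

```javascript
const { EventEmitter } = require('events');

// A long-lived, module-level emitter is reachable for the life of the process.
const bus = new EventEmitter();

function handleRequest() {
  const bigBuffer = Buffer.alloc(1024 * 1024); // per-request data (1 MB)
  const onTick = () => bigBuffer.length;       // closure keeps bigBuffer reachable
  bus.on('tick', onTick);

  // Return a cleanup function so the caller can drop the reference when the request ends.
  return () => bus.off('tick', onTick);
}

const cleanups = [];
for (let i = 0; i < 5; i++) cleanups.push(handleRequest());
console.log(bus.listenerCount('tick')); // 5: each listener pins a 1 MB buffer

cleanups.forEach((cleanup) => cleanup());
console.log(bus.listenerCount('tick')); // 0: the buffers are unreachable again
```

Without the cleanup call, every request would add another listener, and therefore another retained buffer, to an object that never goes away.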

The Orinoco Garbage Collector

Orinoco is the codename of V8’s garbage collector project, which makes use of the latest parallel, incremental, and concurrent techniques for garbage collection in order to free up the main thread. One of the significant metrics describing Orinoco’s performance is how often and for how long the main thread pauses while the garbage collector performs its functions. For classic ‘stop-the-world’ collectors, these pauses impact the application’s user experience through delays, poor-quality rendering, and increased response time.

In the young generation (scavenging), V8 distributes the work of garbage collection across several helper threads. Each thread receives a set of pointers and moves all live objects it finds into “to-space”.

When moving objects into ‘to-space’, threads need to synchronize through atomic read / write / compare and swap operations to avoid a situation where, for example, another thread found the same object, but followed a different path, and tries to move it.
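The copy into to-space can be sketched with Cheney’s classic semi-space algorithm: copy the roots, then scan the copied objects in order, copying whatever they point to and recording a forwarding address so each live object is copied only once. This toy version is single-threaded and uses array indices as pointers, so it shows the idea behind scavenging rather than V8’s parallel implementation, which also promotes long-lived objects to the old generation.

```javascript
// Toy semi-space scavenge (Cheney-style). Each object is { name, refs: [indices into the same space] }.
function scavenge(fromSpace, rootIndices) {
  const toSpace = [];
  const forwarding = new Map(); // from-space index -> to-space index

  // Copy an object into to-space at most once and return its new index.
  function evacuate(fromIndex) {
    if (forwarding.has(fromIndex)) return forwarding.get(fromIndex);
    const newIndex = toSpace.length;
    const original = fromSpace[fromIndex];
    toSpace.push({ name: original.name, refs: [...original.refs] });
    forwarding.set(fromIndex, newIndex);
    return newIndex;
  }

  const newRoots = rootIndices.map((i) => evacuate(i));

  // Scan copied objects in order, evacuating their children and updating their pointers.
  for (let scan = 0; scan < toSpace.length; scan++) {
    toSpace[scan].refs = toSpace[scan].refs.map((i) => evacuate(i));
  }

  return { toSpace, newRoots };
}

const fromSpace = [
  { name: 'dead', refs: [] },
  { name: 'root', refs: [3] },
  { name: 'alsoDead', refs: [0] },
  { name: 'child', refs: [1] }, // cycle back to root
];

const { toSpace, newRoots } = scavenge(fromSpace, [1]);
console.log(toSpace.map((o) => o.name)); // [ 'root', 'child' ]
console.log(toSpace[1].refs);            // [ 0 ]: child now points at root's new slot
```

Dead objects are never touched, so the cost is proportional to the number of survivors, and to-space ends up compact with no free list to maintain. The forwarding map is also where the parallel version needs the atomic operations described above: two threads reaching the same object must agree on a single copy.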

Quote from the V8 blog:

Adding parallel, incremental and concurrent techniques to the existing GC was a multi-year effort, but has paid off, moving a lot of work to background tasks. It has drastically improved pause times, latency, and page load, making animation, scrolling, and user interaction much smoother. The parallel Scavenger has reduced the main thread young generation garbage collection total time by about 20%–50%, depending on the workload. Idle-time GC can reduce Gmail’s JavaScript heap memory by 45% when it is idle. Concurrent marking and sweeping has reduced pause times in heavy WebGL games by up to 50%.

The Mark-Evacuate collector consists of three phases: marking, copying, and updating pointers. To avoid sweeping pages in the young generation to maintain free lists, the young generation is still maintained using a semi-space that is always kept compact by copying live objects into “to-space” during garbage collection. An advantage of doing this in parallel is that ‘exact liveness’ information is available. This information can be used to avoid copying by just moving and relinking pages that contain mostly live objects, which is also performed by the full Mark-Sweep-Compact collector. That collector works by marking live objects in the heap in the same fashion as the mark-sweep algorithm, meaning the heap will often be fragmented. V8 currently ships with the parallel Scavenger, which reduces the main thread young generation garbage collection total time by about 20%–50% across a large set of benchmarks.

Everything related to pausing of the main thread, response time and page load has significantly improved, which allows animations, scrolling and user interaction on the page to be much smoother. The parallel collector made it possible to reduce the total duration of processing of young memory by 20–50%, depending on the load. However, the work is not over: Reducing pauses remains an important task to simplify the lives of web users, and we continue to look for the possibility of using more advanced techniques to achieve the goal.

Conclusions

Most developers don’t need to think about GC when developing JavaScript programs, but understanding some of the internals can help you think about memory usage and helpful programming patterns. For example, given the generational structure of the heap in V8, short-lived objects are actually quite cheap in terms of GC, since we pay mainly for the surviving objects. This pattern is not particular to JavaScript; it applies to many languages with garbage collection support.

Main Takeaways:

- Do not use outdated or deprecated packages like node-memwatch, node-inspector or v8-profiler to inspect and learn about memory. Everything you need is already integrated in the Node.js binary (in particular, the Node.js inspector and debugger). If you need more specialized tooling, you can use NSolid, Chrome DevTools and other well-known software.

- Consider where and when you trigger heap snapshots and CPU profiles. You will mostly want to trigger both in testing, because of how CPU-intensive it is to take a snapshot in production. Also, be sure of how many heap dumps are fine to write out before shutting down the process and causing a cold restart.

- There’s no one tool for everything. Test, measure, decide and resolve depending on the application. Choose the best tool for your architecture and the one that delivers the most useful data to figure out the issue.

References

- Memory Management Reference

- Trash talk: the Orinoco garbage collector v8-perf

- Taming The Unicorn: Easing JavaScript Memory Profiling In Chrome DevTools

- JavaScript Memory Profiling

- Memory Analysis 101

- Memory Management Masterclass

- The Breakpoint Ep. 8: Memory Profiling with Chrome DevTools

- Thorsten Lorenz - Memory Profiling for Mere Mortals

- Eliminating Memory Leaks in Gmail